BY: Habeeb Adisa & Mustapha Lawal

Nigeria’s digital political ecosystem is currently embroiled in a controversy involving the chairman of the Independent National Electoral Commission (INEC), Professor Joash Ojo Amupitan, and a disputed account on X allegedly linked to him. What might have remained a routine allegation quickly escalated into a national conversation, not only because of claims that the account shared partisan content during the 2023 election cycle, but because an artificial intelligence system, Grok, was interpreted by users as confirming the account’s ownership history.

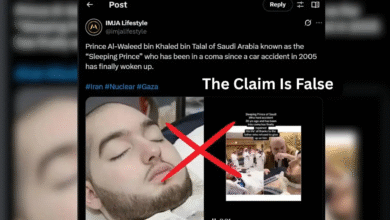

The controversy began after screenshots of posts allegedly linked to an account previously bearing the handle @joashamupitan resurfaced online. Critics argued that the posts suggested political support for President Bola Ahmed Tinubu, raising questions about the neutrality expected of Nigeria’s electoral umpire. INEC denied the claim, stating that the chairman does not operate any personal account on X and describing the allegation as misleading. Yet, as is often the case in the digital age, the denial did little to slow the momentum of the narrative once it had taken hold.

What transformed the episode from a typical social media dispute into a broader political controversy was the role played by artificial intelligence. Users turned to Grok, the chatbot integrated into X, asking it to investigate the origins of the account. Screenshots of its responses circulated rapidly, with many interpreting them as confirmation that the account had once belonged to the INEC chairman. Within hours, these AI-generated responses were being cited across online spaces as though they were the outcome of a verified digital investigation.

This reaction reflects a growing misunderstanding of how such systems function. Grok, like other large language models, does not independently verify claims or access private databases capable of confirming account ownership. Its outputs are generated from patterns in data, not from real-time forensic analysis. Yet in a fast-moving information environment, the distinction between generated responses and verified evidence can easily collapse, especially when the outputs align with existing political suspicions.

Beyond the role of artificial intelligence, the controversy exposes a deeper structural problem confronting fact-checkers and researchers: the increasing difficulty of verifying account ownership on X. Establishing who operates a specific account has become significantly more complex in recent years. Verification badges, once treated as signals of authenticity, can now be obtained through paid subscriptions, reducing their reliability as indicators of identity. At the same time, there is little publicly accessible metadata that allows independent investigators to confirm who controls an account. Usernames can be changed and reassigned, creating further ambiguity, while impersonation of public figures remains widespread.

In such an environment, even well-intentioned attempts to trace the origin of a claim can lead to uncertain conclusions. Fact-checkers are often left to rely on indirect methods, such as cross-referencing statements with official channels, analysing historical posting patterns, or seeking confirmation from credible sources. Where such corroboration is unavailable, attribution becomes difficult, and in some cases impossible, to establish with certainty. This creates a gap between what is claimed online and what can be conclusively verified.

The speed at which the controversy over the INEC chairman’s alleged X account spread illustrates how this gap is exploited by the architecture of modern social media. Platforms like X reward immediacy and engagement, allowing claims to travel faster than the processes required to verify them. In this case, screenshots of Grok’s responses became portable “evidence”, shared across networks and interpreted as proof, even in the absence of verifiable backing. Additional claims, including suggestions that the account had been renamed, further fuelled speculation and extended the lifespan of the controversy.

INEC’s response, while clear, struggled to match the velocity of the narrative. The commission reiterated that the chairman does not operate a personal X account and noted that fake accounts impersonating him had previously been identified. However, once a claim reaches a critical threshold of visibility, institutional rebuttals often arrive too late to fully contain its impact. Public perception, shaped in real time by algorithms and networked amplification, begins to settle long before verification is complete.

The implications of this episode extend beyond a single disputed account. As Nigeria approaches the 2027 elections, the information environment is becoming increasingly complex. Artificial intelligence, social media dynamics, and weak mechanisms for digital identity verification are converging in ways that make it easier for unverified claims to gain traction and harder for truth to be established quickly. In this context, the challenge is no longer limited to detecting false information; it also involves navigating situations where definitive verification may not be immediately possible.

For fact-checkers, this represents a significant shift. The task is not only to debunk false claims, but also to clearly communicate the limits of what can be verified. Where evidence is incomplete or attribution cannot be confirmed, that uncertainty itself must become part of the public record. Without this, the risk is that speculation, amplified by technology and interpreted as fact, begins to erode trust in institutions that depend on public confidence to function effectively.

Recent analyses have already warned about the growing risks posed by deepfakes and AI-driven disinformation ahead of Nigeria’s 2027 elections. This incident shows that the threat is more complex still: it extends beyond synthetic media to include the misinterpretation of AI systems themselves, where generated responses are mistaken for verified truth and uncertainty is quickly replaced by conviction.