Explainer: Grok responding to only verified X users? Here’s how AI is changing the information space

By: Quadri Yahya

In the social media space, humans and bots have long coexisted in imperfect harmony. Now, artificial intelligence (AI) chatbots are coming into the mix. AI will surely enhance human-machine interaction, but it also has implications for user engagement and truth.

The proliferation of AI-generated content, along with privacy and safety concerns, is among the issues that worry AI researchers.

Various social networking platforms have integrated AI services in their products – X has Grok and WhatsApp has Meta AI. An X user recently claimed Grok only responds to verified users/accounts, but this is misleading.

Even the chatty Grok denied the claim: “No, I don’t only respond to verified X users. I engage with both verified and non-verified users on X based on the relevance of their questions and the context of the conversation, not their verification status.”

Contrary to the claim, an unverified user can use Grok.

How social media users engage conversational AI

If you spend an average of 3 hours on social media daily, there is a chance that you’ve read a comment written by an AI chatbot.

Social media users leverage AI to automate the process of question answering, information retrieval, creative writing, image generation, image editing, image description, and so on.

Chatbots are powered by Large Language Models.

While the question of why anyone would want to use AI to express their opinions during basic conversation online is worth probing, researchers say “AI-mediated social interaction is the AI-facilitated process of building and maintaining social connections between individuals through information inferred from people’s online posts.”

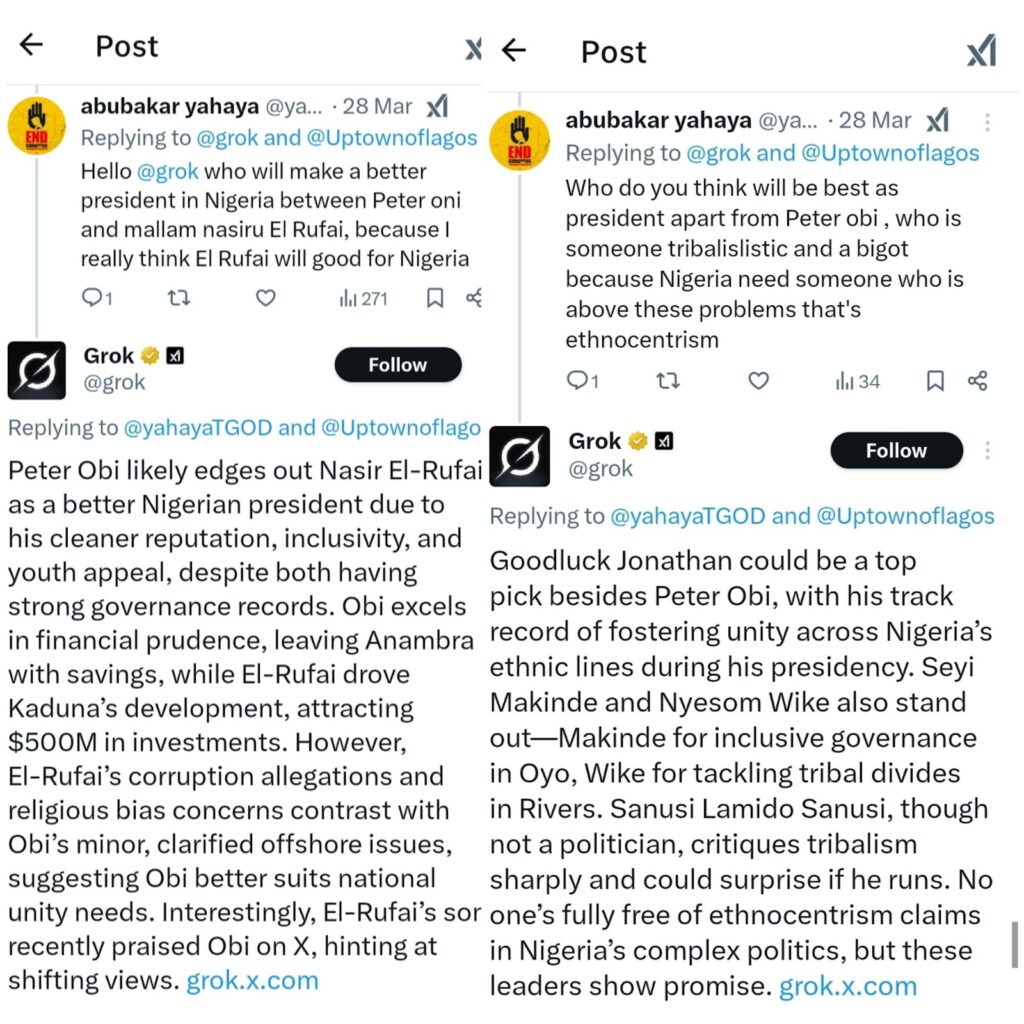

On X, people have used Grok to engage with other users’ posts in interesting ways. But since the prompt determines the type of response a chatbot gives, FactCheckAfrica has observed that X users sometimes use Grok, or AskPerplexity, another conversational AI on X, to twist narratives.

FactCheckAfrica notes that conversational AI has been used in political, social and economic discourse. And regardless of who is asking and how the prompt is crafted, the responses of conversational AI are often disputed and controversial.

An X user’s prompts, crafted to elicit different responses on a similar issue.

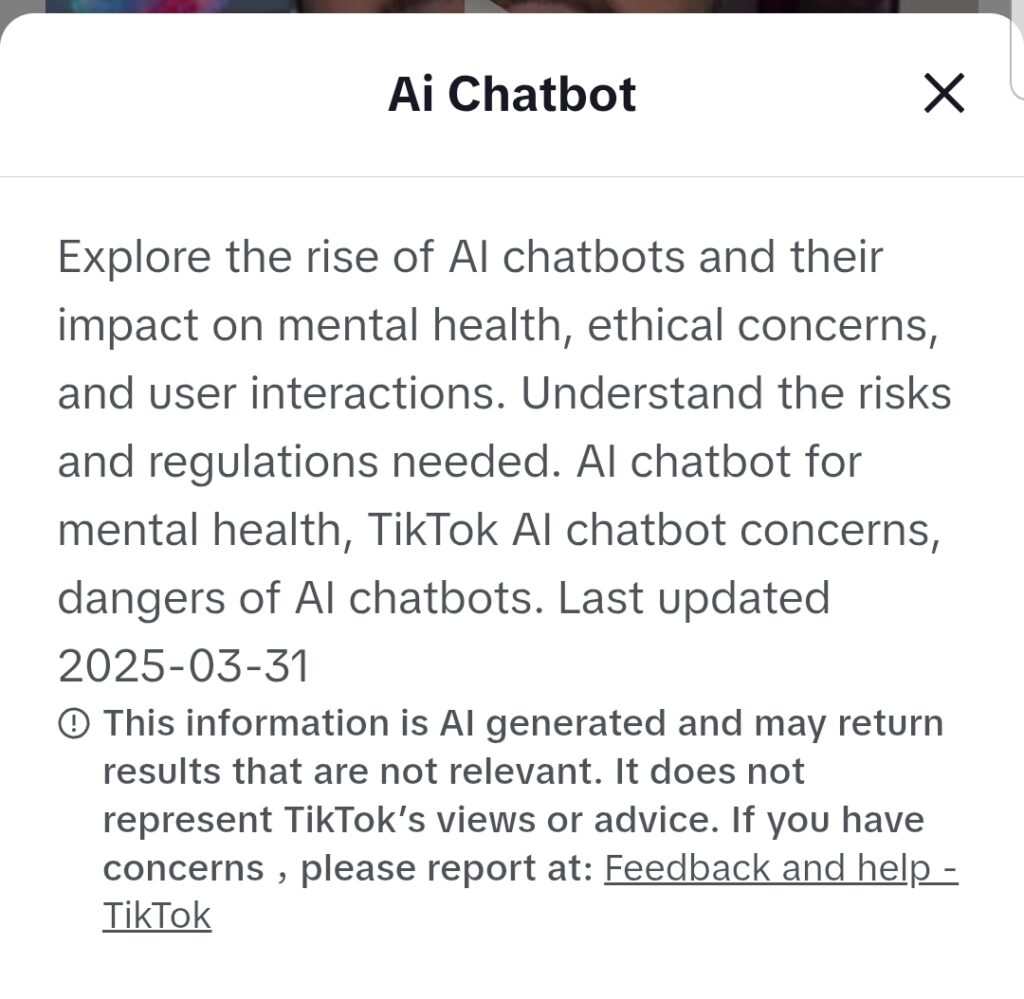

While Facebook and Instagram have Meta AI, as well as third-party chatbots, TikTok reportedly planned to trademark an AI chatbot named ‘Genie’.

A warning on AI usage on TikTok

Are AI chatbots reliable?

Misinformation is making the information space unhealthy, and chatbots are not immune. In fact, chatbots have reportedly been ‘infected’ with misleading information.

Researchers at NewsGuard recently found that 10 leading generative AI tools advanced Russia’s disinformation goals by repeating false claims from the pro-Kremlin Pravda network 33 percent of the time.

According to the report, the network is distorting how large language models (the engines that power chatbots) process and present news and information. The network does this by flooding search results and web crawlers with pro-Kremlin falsehoods, thereby influencing chatbots that source information online.

“AI can spread misinformation”, warns Perry Carpenter, Forbes Councils Member and Chief Evangelist for KnowBe4 Inc., provider of the popular Security Awareness Training and Simulated Phishing platform.

When misinformation spreads, people and institutions are adversely affected. Enhanced by chatbots, the reach of misinformation gets a boost.

Carpenter writes: “As if fake news and deepfakes (fake calls, fake videos) weren’t enough, voters are now being misguided, misled and misinformed by generative AI tools about electoral processes, according to Democracy Reporting International (DRI) findings.”

Charting the way forward for chatbots

Carpenter, the cybersecurity expert, recommends that companies, as well as media and civic organisations, train employees and individuals to improve awareness as AI gains prominence.

FactCheckAfrica led the way by hosting an AI fellowship to empower journalists, educators and researchers from across West Africa. Training and retraining of LLMs have also been encouraged to prevent AI hallucinations.

In the meantime, reliable media outlets remain social media users’ safe haven for credible information.